When Good CAs Go Bad: Other Things to Check in Your Datacenter

The recent problems at DigiNotar (and now GlobalSign) have gotten a lot of folks thinking about what happens when significant events impact our trust in Public Certificate Authorities, and how that affects users of secured services. But aside from the browsers at the desktop, what is affected and what should we look at in our infrastructure?

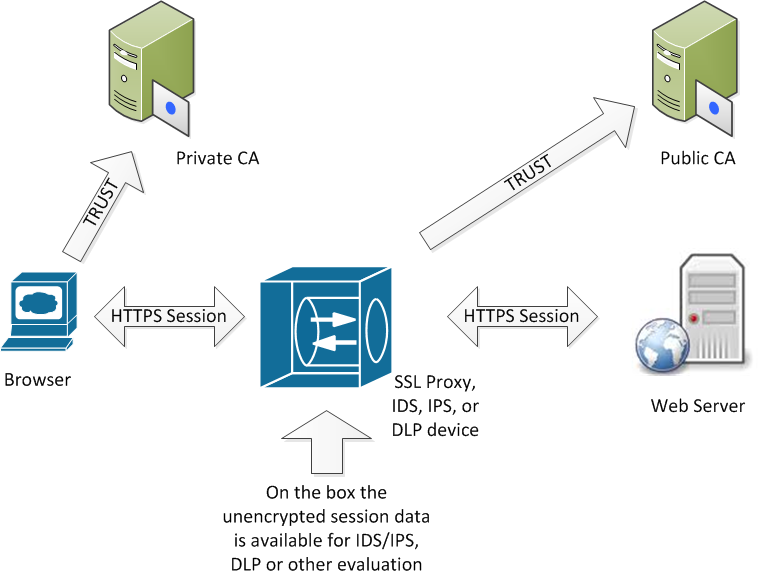

Several of our readers, along with a lively discussion on several of the SANS email lists, have brought up SSL proxy servers and any other IDS / IPS device that does "SSL proxy" encryption. If you are not familiar with this concept, in a general way these products work as shown in the diagram above.

As you can see from the diagram, the person at the client workstation "sees" an HTTPS browser session with a target webserver. However, the client's HTTPS session is actually with the proxy box (using the client's trust in the Private CA to cut a dynamic cert), and the HTTPS session with the target web server is actually between the proxy and the web server, not involving the client at all.

In many cases, the private CA for this resides directly on the proxy hardware, allowing certs to be issued very quickly as the clients browse.

In any case, the issue that we're seeing is that these units are often not patched as rapidly as servers and desktops, so many of these boxes remain blissfully unaware of all the issues with DigiNotar. If you have an SSL proxy server (or an IDS / IPS unit that handles SSL in this way), it's a good idea to check the trusted CA list on your server, and also check for any recent patches or updates from the vendor.
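As a rough illustration of that trusted-CA check, here is a minimal Python sketch. It assumes you can get the trust list into the shape that Python's `ssl.SSLContext.get_ca_certs()` returns (a list of dicts with a nested `subject` tuple). The `DISTRUSTED` watch list and the function names are hypothetical, and a real proxy appliance will most likely need its trust list exported through the vendor's own tooling first.

```python
import ssl

# Hypothetical watch list: substitute the CA names you no longer trust.
DISTRUSTED = ("diginotar",)


def flatten_name(name):
    """Flatten the nested tuples ssl uses for a subject into a plain dict."""
    return {key: value for rdn in name for key, value in rdn}


def find_distrusted(ca_certs, needles=DISTRUSTED):
    """Return the subjects of trusted roots whose fields mention a needle.

    ca_certs is assumed to look like SSLContext.get_ca_certs() output.
    Matching is a simple case-insensitive substring test on subject fields.
    """
    hits = []
    for cert in ca_certs:
        subject = flatten_name(cert.get("subject", ()))
        text = " ".join(subject.values()).lower()
        if any(needle in text for needle in needles):
            hits.append(subject)
    return hits
```

To check the machine the script runs on, you could pass in `ssl.create_default_context().get_ca_certs()`. To check a bundle exported from another device, load it into a fresh `ssl.SSLContext` with `load_verify_locations(cafile=...)` first. Note that `get_ca_certs()` does not list certificates sitting in a `capath` directory until they have been used, so an empty result is not proof that the store is clean.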

It's probably a good time to do some certificate "housekeeping" - look at all devices that use public, private or self-signed certificates. Off the top of my head, I'd look at any web or mail servers you might have with certificates, load balancers in your web farm that might be front-ending any HTTPS web servers, any FTP servers or SSH servers that might use public certificates, and any SSL VPN appliances.

What should you look for? Make sure that you're using valid private or public certificates - not self-signed certificates for anything (self-signed certs are especially common on admin interfaces for datacenter infrastructure).

A time like this is often a good point to ask whether your organization should get all its certs with one vendor, in one contract, on a common renewal date. That simplifies renewals and helps ensure that nothing gets missed, resulting in an expired cert facing your clients. It may also be time to consider an EV (extended validation) cert on some servers, or to downgrade an existing EV cert to a standard one. (Look for more on CA nuts and bolts in an upcoming diary.)

Check renewal dates to ensure that you have them all noted properly. If you've standardized on 3 year certificates, has one of your admins slipped a 1 year cert in by accident? (We see this all the time: often a 1 year cert is less than the corporate PO limit, and a 2 or 3 year cert is over.)
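The housekeeping checks above (self-signed certs, upcoming renewals, a 1 year cert slipped in where 3 years is the standard) can be sketched in a few lines of Python. This is only an illustration: it assumes each certificate is already available as a dict shaped like the output of `ssl.SSLSocket.getpeercert()`. The function name, the 60 day warning window and the finding labels are all made up for the example, and how you collect the certs from your devices is left out.

```python
import ssl

SECONDS_PER_DAY = 86400


def audit_cert(cert, now, standard_years=3, warn_days=60):
    """Return a list of finding labels for one certificate dict.

    cert is assumed to carry 'subject', 'issuer', 'notBefore' and
    'notAfter' in the format used by getpeercert(); now is seconds
    since the epoch.
    """
    not_before = ssl.cert_time_to_seconds(cert["notBefore"])
    not_after = ssl.cert_time_to_seconds(cert["notAfter"])
    days_left = (not_after - now) // SECONDS_PER_DAY
    term_years = round((not_after - not_before) / (365.25 * SECONDS_PER_DAY))

    findings = []
    # Issuer equal to subject is the usual sign of a self-signed cert.
    if cert.get("issuer") == cert.get("subject"):
        findings.append("self_signed")
    if days_left < 0:
        findings.append("expired")
    elif days_left <= warn_days:
        findings.append("expiring")
    # Flag terms that differ from the organization's standard.
    if term_years != standard_years:
        findings.append("nonstandard_term")
    return findings
```

Run over an inventory of certs with `now` set to `time.time()`, anything that comes back with a non-empty list is worth a closer look before the next renewal cycle.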

What else should you check? What other devices in the datacenter can you think of that need to trust a public CA? Mail servers come to mind, but I'm sure that there are others in the mix - please use our comment form to let us know what we've missed.

===============

Rob VandenBrink

Metafore

How Makers of Web Browsers Include CAs in Their Products

Since Certificate Authorities (CAs) are on many people's minds nowadays, we asked @sans_isc followers on Twitter:

How do browser makers (Microsoft, Mozilla, Google, Opera) decide which CAs to put into the product?

Several individuals kindly provided us with pointers to the vendors' documentation that describe their processes for including CAs in web browser distributions:

- Microsoft describes its Root Certificate Program (thanks, @leftistqueer)

- Mozilla maintains a CA Certificate Inclusion Policy (thanks, @ypatiadotca and @rik24d)

- Apple documents the requirements for its Root Certificate Program (thanks, @GothAlice)

- Opera clarifies how to get a root certificate included in its browser (thanks, @Chasapple)

If you have a pointer to Google Chrome certificate-inclusion practices, please let us know.

-- Lenny

Lenny Zeltser focuses on safeguarding customers' IT operations at Radiant Systems. He also teaches how to analyze and combat malware at SANS Institute. Lenny is active on Twitter and writes a daily security blog.

Should We Still Test Patches?

I know, I know, this title sounds like heresy. The IT and Infosec villagers are charging up the hill right now, forks out and torches ablaze! I think I can hear them - something about "test first, then apply in a timely manner"?? (Methinks they weren't born under a poet's star). While I get their point, it's time to throw in the towel on this I think.

On every security assessment I do for a client who's doing their best to do things the "right way", I find at least a few, but sometimes a barnful, of servers that have unpatched vulnerabilities (and often are compromised).

Really, look at the volume of patches we've got to deal with:

From Microsoft - once a month, but anywhere from 10-40 in one shot, every month! Since the turnaround from patch release to exploit on most MS patches is measured in hours (and is often in negative days), what exactly is "timely"?

Browsers - Oh talk to me of browsers, do! Chrome is releasing patches so quickly now that I can't make head or tails of the version (it was 13.0.782.220 today, yesterday it was .218, the update just snuck in there when I wasn't looking). Firefox is debating removing their version number from help/about entirely - they're talking about just reporting "days since your last confession ... er ... update" instead (the version will still be in the about:support url - a nifty page to take a close look at once in a while). IE reports a sentence-like version number similar to Chrome.

And this doesn't count email clients and servers, VOIP and IM apps, databases and all the stuff that keeps the wheels turning these days.

In short, dozens (or more) critical patches per week are in the hopper for the average IT department. I don't know about you, but I don't have a team of testers ready to leap into action, and if I had to truly, fully test 12 patches in one week, I would most likely not have time to do any actual work, or probably get any sleep either.

Where it's not already in place, it's really time to turn auto-update on for almost everything, grab patches the minute they are out of the gate, and keep the impulse engines - er - patch "velocity" at maximum. The big decision then is when to schedule reboots for the disruptive updates. This assumes that we're talking about "reliable" products and companies - Microsoft, Apple, Oracle, the larger Linux distros, Apache and MySQL for example - people who *do* have staff dedicated to testing and QA on patches (I realize that "reliable" is a matter of opinion here ..). I'm NOT recommending this for any independent / small team open source stuff, or products that'll give you a daily feed off subversion or whatever. Or if you've got a dedicated VM that has your web app pentest kit, wireless drivers and 6 versions of Python for the 30 tools running all just so, any updates there could really make a mess. But these are the exceptions rather than the rule in most datacenters.

Going to auto-pilot is almost the only option in most companies. Management simply isn't paying anyone to test patches, they're paying folks to keep the projects rolling and the tapes running on time (or whatever other daily tasks "count" in your organization). The more you can automate the better.

Mind you, testing large "roll up" patch sets and Service Packs is still recommended. These updates are more likely to change operation of underlying OS components (remember the chaos when packet signing became the default in Windows?).

There are a few risks in the "turn auto-update on and stand back" approach:

- A bad patch will absolutely sneak in once in a while, and something will break. For this, in most cases, it's better to suck it up for that one day, and deal with one bad patch per year as opposed to being owned for 364 days. (just my opinion mind you)

- If your update source is compromised, you are really and truly toast - look at the (very recent) kernel.org compromise ( http://isc.sans.edu/diary.html?storyid=11497 ) for instance. Now, I look at a situation like that, and I figure - "if they can compromise a trusted source like that, am I going to spot their hacked code by testing it?" Probably not, they're likely better coders than I am. It's not a risk I should ignore, but there isn't much I can do about it, so I try really hard to (ignore it).

What do you think? How are you dealing with the volume of patches we're faced with, and how's that workin' for ya? Please, use our comment form and let us know what you're seeing!

===============

Rob VandenBrink

Metafore
