Quick Password Brute Forcing Evolution Statistics

We have collected SSH and telnet honeypot data in various forms for about 10 years. Yesterday's diaries, and looking at some new usernames attempted earlier today, made me wonder if botnets just add new usernames or remove old ones from their lists. So I pulled some data from our database to test this hypothesis. I didn't spend a lot of time on this, and this could use a more detailed analysis. But here is a preliminary result:

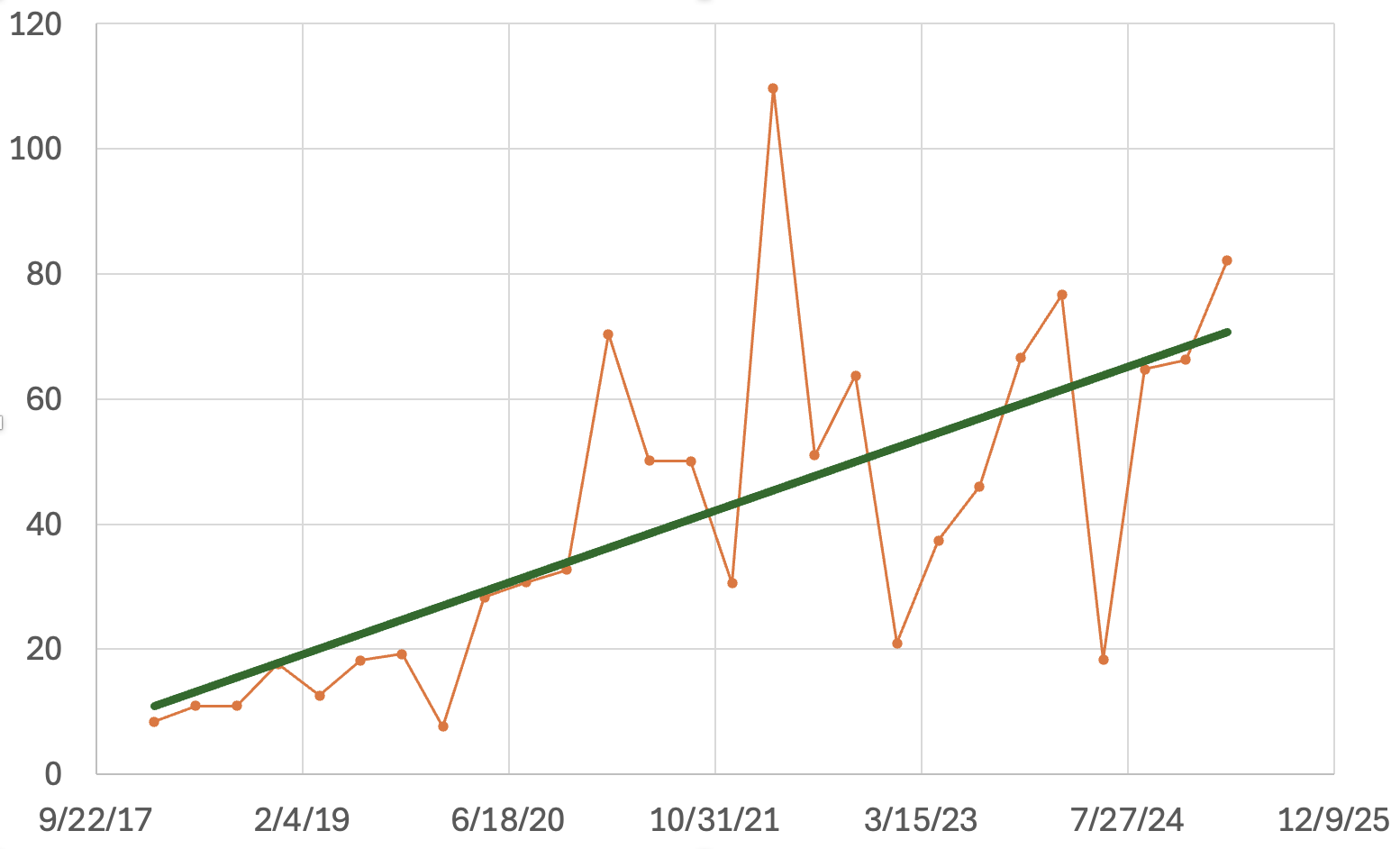

The average number of username/password combinations has increased, but not steadily. I only went back to 2018 because our data is more consistent from that year on. Scans began with about 10-20 username/password combinations attempted from each source IP, and more recently, bots attempted around 50 username/password combinations.

The graph shows the average number of username/password combinations attempted per source IP address.
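For anyone who wants to run a similar check against their own honeypot logs, here is a rough sketch of how such an average could be computed. It is not the query I ran against our database. The record layout (month, source IP, username, password), the function name `avg_combos_per_source`, and the choice to count each distinct combination only once per source IP and month are all assumptions made for illustration.

```python
from collections import defaultdict
from statistics import mean


def avg_combos_per_source(records):
    """Average number of distinct username/password pairs per source IP, by month.

    `records` is an iterable of (month, source_ip, username, password) tuples.
    This layout is hypothetical; adapt it to your own log format.
    """
    # (month, source_ip) -> set of distinct (username, password) pairs
    combos = defaultdict(set)
    for month, source_ip, username, password in records:
        combos[(month, source_ip)].add((username, password))

    # month -> list of per-IP combination counts
    per_month = defaultdict(list)
    for (month, _source_ip), seen in combos.items():
        per_month[month].append(len(seen))

    return {month: mean(counts) for month, counts in sorted(per_month.items())}


# Made-up sample data using documentation IP addresses (RFC 5737).
sample = [
    ("2018-01", "192.0.2.1", "root", "root"),
    ("2018-01", "192.0.2.1", "admin", "admin"),
    ("2018-01", "192.0.2.2", "root", "12345"),
    ("2018-01", "192.0.2.2", "root", "12345"),  # repeated attempt, counted once
    ("2018-02", "192.0.2.3", "pi", "raspberry"),
]

if __name__ == "__main__":
    for month, average in avg_combos_per_source(sample).items():
        print(month, average)
```

Counting distinct pairs keeps a bot that retries the same credentials from inflating the average. If you would rather measure total attempts, swap the set for a plain counter.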

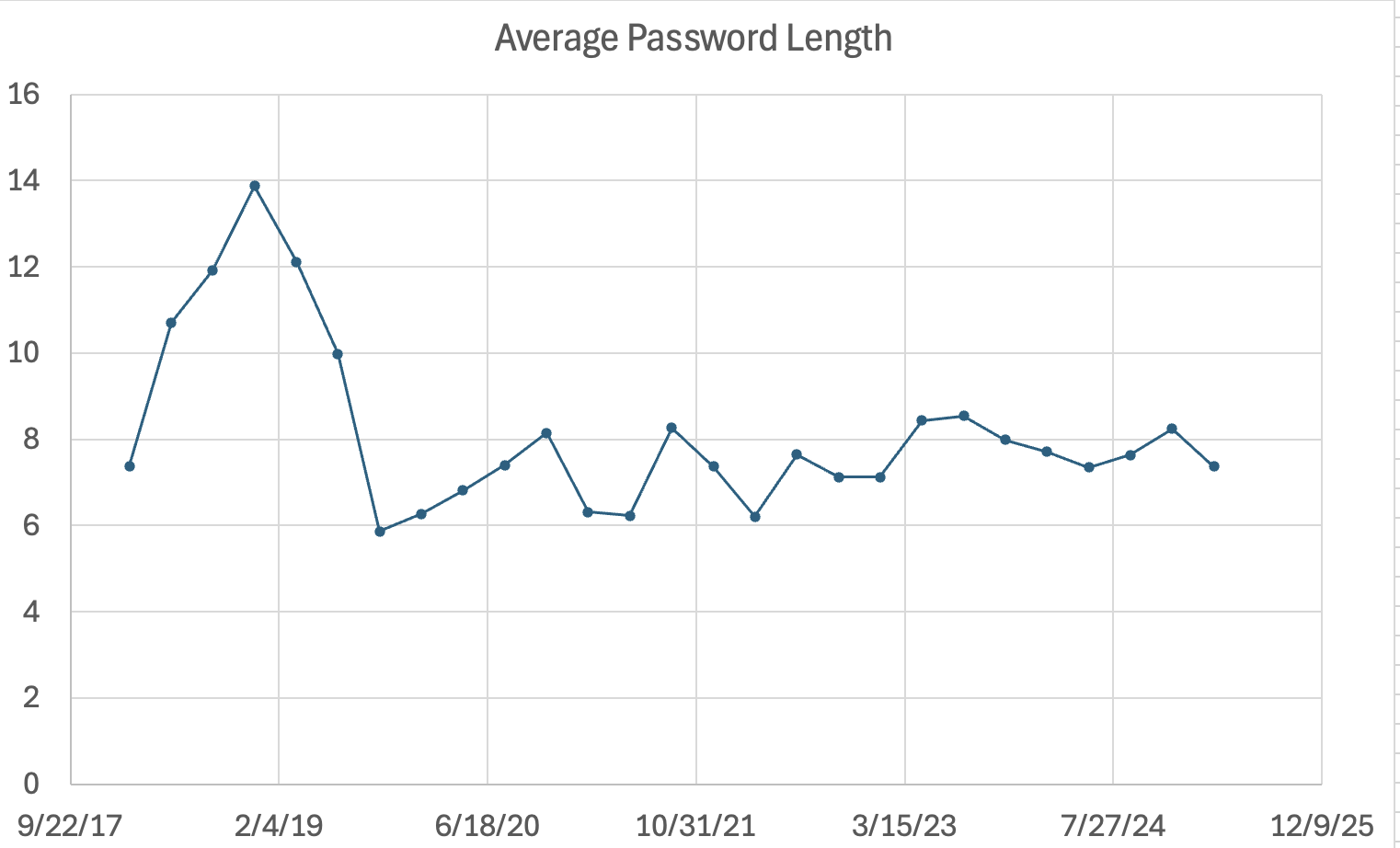

Next, I checked to see if the average password length had changed. Interestingly, there was a "peak" in password length around late 2018/early 2019. But otherwise, it has been very steady at around eight characters. Password brute forcing from bots like Mirai is not about password complexity. We have complex default passwords, like "3245gs5662d34", that are regularly among our top ten most frequently attempted passwords because this password is a well-known default password for a popular video camera system. Many default passwords are around eight characters long (like "password"), which explains the popularity of passwords around that length.
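The password length check can be sketched the same way. Again, this is only an illustration under assumptions: the (month, password) input layout and the function name `avg_password_length` are hypothetical, and every attempt is weighted equally, which may differ from how the graph was produced.

```python
from collections import defaultdict
from statistics import mean


def avg_password_length(attempts):
    """Average length of attempted passwords, grouped by month.

    `attempts` is an iterable of (month, password) tuples (hypothetical layout).
    Each attempt counts once, so frequently tried passwords weigh more.
    """
    lengths = defaultdict(list)
    for month, password in attempts:
        lengths[month].append(len(password))
    return {month: mean(values) for month, values in sorted(lengths.items())}


# Made-up sample: the two passwords mentioned above plus one short default.
attempts = [
    ("2019-01", "password"),
    ("2019-01", "3245gs5662d34"),
    ("2019-02", "admin"),
]

if __name__ == "__main__":
    for month, average in avg_password_length(attempts).items():
        print(month, average)
```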

---

Johannes B. Ullrich, Ph.D. Dean of Research, SANS.edu
