Dealing with Disaster - A Short Malware Incident Response

I had a client call me recently with a full-on service outage - his servers weren't reachable, his VoIP phones were giving him more static than voice, and his Exchange server wasn't sending or receiving mail - pretty much everything was offline.

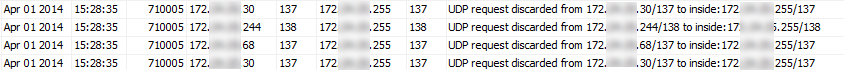

I VPN'd in (I was not onsite) and started with the firewall, because things were bad enough that's all I could initially get to from a VPN session. No surprise, I saw thousands of events per second flying by that looked like:

So right away this looks like malware, broadcasting on UDP ports 137 and 138 (NetBIOS name service and datagram service). You'll usually have some level of this traffic in almost any network, but the volumes in this case were high enough to DoS just about everything. I was lucky to keep my SSH sessions (see below) going long enough to get things under control. And yes, that was me behind Monday's post on this, if this sounds familiar.

To get the network to some semblance of usability, I ssh'd to each switch in turn and put broadcast limits on each and every switch port:

On Cisco:

interface gigabitethernet0/x

storm-control broadcast level 20 (for 20 percent)

or

storm-control broadcast level pps 100 (for packets per second)

On HP Procurve:

interface x

broadcast-limit 5 (where 5 is a percentage of the total possible traffic)

On HP Comware:

int gig x/0/y

broadcast-suppression pps 200

or

storm-constrain broadcast pps 200 200

(you can do these in percent as well if you want)

Where I can, I try to do this in packets per second, so that the discussion with the client can be "of course we shut that port down - there's no production traffic in your environment that should generate more than 100 broadcasts per second."
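Typing the same two lines into every port gets tedious on a large switch. As a minimal sketch (not what was used during the incident), the Cisco per-port limit above could be generated for pasting into an SSH session. The function name, the interface names and the four-port range here are assumptions for illustration only; substitute your switch's real port names.

```python
def storm_control_config(interfaces, pps=100):
    """Build Cisco config lines that apply a broadcast limit to each interface."""
    lines = []
    for name in interfaces:
        lines.append(f"interface {name}")
        # Same command as shown above, with the limit in packets per second
        lines.append(f" storm-control broadcast level pps {pps}")
    return lines


# Hypothetical 4-port example; a real switch will have different names and counts.
ports = [f"gigabitethernet0/{n}" for n in range(1, 5)]
config = storm_control_config(ports)
print("\n".join(config))
```

The output is plain text, so it can be reviewed before it goes anywhere near a production switch.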

With that done, I now could get to the syslog server. What we needed was a quick-and-dirty list of the infected hosts, in order of how much grief they were causing.

First, let's filter out the records of interest - everything that has a broadcast address and a matching NetBIOS port in it. The syslog server is a Windows host, so we'll use Windows commands (plus some GNU commands):

type syslogcatchall.txt | find "172.xx.yy.255/13"

But we don't really want the whole syslog record, and this short filter still leaves us with thousands of events to go through.

Let's narrow this down:

First, let's use "cut" to pull just the source IP out of these events of interest.

cut -d " " -f 7

Unfortunately, that field also includes the source port, so let's remove that by using "/" as the field delimiter, and take only the source IP address (field one):

cut -d "/" -f 1

Next, use sort and uniq -c (the -c gives you a count for each source IP),

then use sort /r (the Windows sort switch for reverse order) to do a reverse sort based on record count.

Putting it all together, we get:

type syslogcatchall.txt | find "172.xx.yy.255/13" | cut -d " " -f 7 | cut -d "/" -f 1 | sort | uniq -c | sort /r > infected.txt

This gave us a 15-line file, sorted so that the worst offenders were at the top of the list, with a record count for each. My client took these 15 stations offline and started the hands-on assessment and "nuke from orbit" routine on them, since their AV package didn't catch the offending malware.
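If neither the GNU tools nor SUA are on the box but Python is, the same filter, cut, count and reverse-sort steps can be sketched in a few lines. This is only an illustration of the logic, not what we ran. The function name is made up, the sample records are an invented format using documentation addresses (not the real firewall log layout), and field 7 holding "source-ip/port" is carried over from the cut command above.

```python
from collections import Counter


def count_broadcast_sources(lines, marker, field=7):
    """Count source IPs in syslog lines containing `marker`.

    Mirrors: find MARKER | cut -d " " -f FIELD | cut -d "/" -f 1 | sort | uniq -c
    """
    counts = Counter()
    for line in lines:
        if marker not in line:
            continue                            # same job as find "..."
        parts = line.rstrip("\n").split(" ")    # same job as cut -d " "
        if len(parts) < field:
            continue                            # record too short to hold the field
        source = parts[field - 1].split("/")[0]  # drop the "/port" suffix
        counts[source] += 1
    # Highest count first, like the final reverse sort
    return counts.most_common()


# Invented sample records: field 7 is "source-ip/port", field 8 the destination.
sample = [
    "Jan 01 00:00:01 fw1 deny udp 192.0.2.10/137 192.0.2.255/137",
    "Jan 01 00:00:01 fw1 deny udp 192.0.2.10/138 192.0.2.255/138",
    "Jan 01 00:00:02 fw1 deny udp 192.0.2.20/137 192.0.2.255/137",
    "Jan 01 00:00:02 fw1 permit tcp 192.0.2.30/51000 192.0.2.5/443",
]
result = count_broadcast_sources(sample, "192.0.2.255/13")
for ip, hits in result:
    print(hits, ip)
```

Against a real log you would pass an open file handle instead of the sample list, and adjust the field number to match your firewall's record layout.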

What else did we learn during this incident?

- Workstations should never be on server VLANs.

- Each and every switch port needs basic security configured on it (broadcast limits today).

and, in related but not directly related lessons ...

- Their guest wireless network was being used as a base for torrent downloads.

- Their guest wireless network also had an infected workstation (we popped a shun on the firewall for that one).

- Their syslog server wasn't being patched by WSUS - that poor server hadn't seen a patch since December's Patch Tuesday.

What did we miss?

By the time I got onsite, the infected machines had all been re-imaged, so we didn't get a chance to assess the actual malware. We don't know what it was doing besides this broadcast activity, and don't have any good way of working out for sure how it got into the environment. Since this distilled down to one infected laptop, my guess would be that this is malware that got picked up at home, but that's just a guess at the moment.

Just a side note, but an important one - cut, uniq, sed and grep are all on your syslog server if it's a *nix host, but if you run syslog on Windows, these commands are still pretty much a must-have. As you can see, with these commands we were able to distill a couple of million records down to 15 usable, actionable lines of text within a few minutes - a REALLY valuable toolset and skill to have during an incident. Microsoft provides these tools in their "SUA" - Subsystem for UNIX-based Applications, which has been available for many years and for almost every version of Windows.

Or if you need to drop just a few of these commands onto a server during an incident, especially if you don't own that server, you can get what you need from the GnuWin32 tools (gnuwin32.sourceforge.net) - I keep this toolset on my laptop for just this sort of situation.

Once you get the hang of using these tools, you'll find that your fingers will type "grep" instead of find or findstr in no time!

===============

Rob VandenBrink

Metafore
