Certificate Errors in Office 365 Today

It looks like there's a mis-assignment of certificates today at Office 365. After login, the redirect to portal.office.com reports the following error:

portal.office.com uses an invalid security certificate.

The certificate is only valid for the following names: *.bing.com, *.platform.bing.com, bing.com, ieonline.microsoft.com, *.windowssearch.com, cn.ieonline.microsoft.com, *.origin.bing.com, *.mm.bing.net, *.api.bing.com, ecn.dev.virtualearth.net, *.cn.bing.net, *.cn.bing.com, *.ssl.bing.com, *.appex.bing.com, *.platform.cn.bing.com

Hopefully they'll have this resolved quickly. Thanks to our reader John for the heads-up on this!

======================================================

UPDATE (4pm EST)

Looks like this has been resolved - note from Microsoft:

Closure Summary: On Thursday, July 10, 2014, at approximately 3:57 AM UTC, engineers identified an issue in which some customers may have encountered intermittent certificate errors when navigating to the Office 365 Customer Portal. Investigation determined that a recent update to the environment caused impact to a limited portion of capacity which is responsible for handling site certificate authorization. Engineers reconfigured settings to correct the underlying issue which mitigated impact. The issue was successfully fixed on Thursday, July 10, 2014, at 5:54 PM UTC.

Great job, guys - thanks much!

===============

Rob VandenBrink

Metafore

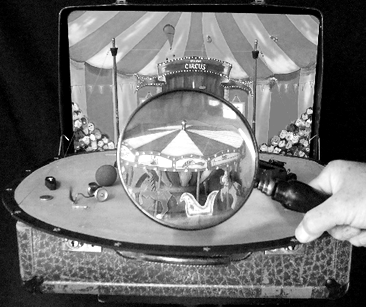

Finding the Clowns on the Syslog Carousel

So often I see clients faithfully logging everything from the firewalls, routers and switches - taking terabytes of disk space to store it all. Sadly, the interaction after the logs are created is often simply to make sure that the partition doesn't fill up - either old logs are just deleted, or each month logs are burned to DVD and filed away.

The comment I often get is that log entries are complex, and that the sheer volume of information makes it impossible to make sense of it. With tens, hundreds or thousands of events per minute, the log entries whiz by at a dizzying speed. Just deciding to review logs can be a real time-eater, unless you have a method to distil the data and find the "clowns" on the carousel so you can deal with them appropriately.

(not to scale)

The industry answer to this is to install a product. You can buy one of course, or use free tools like Bro, ELSA or Splunk (free up to a certain daily log volume), which can all do a good job of this. NetFlow solutions will also do a great job of categorizing traffic pictorially.

But what if you don't have any of that? Or what if you've got a few hundred gigs of text logs, and need to solve a problem or do Incident Handling RIGHT NOW?

Let's look at a few examples of things you might look for, and how you'd go about it. I'll use Cisco log entries as an example, but aside from field positions, you can apply this to any log entry at all, including Microsoft events that have been redirected to syslog with a tool such as Snare.

First, let's figure out who is using DNS but is NOT a DNS server:

type syslogcatchall.txt | grep "/53 " | grep -v a.a.a.b | grep -v a.a.a.c

Where a.a.a.b and a.a.a.c are the "legit" internal DNS servers. We're using "/53 ", with that explicit trailing space, to make sure that we're catching DNS queries, but not traffic on port 531, 532, 5311, 53001 and so on.

That leaves us with a bit of a mess - wa-a-ay too many records, and the text is just plain too tangled to deal with. Let's pull out just the source IP address in each line, then sort the list and count the log entries per source address. Note that we're using a Windows Server host with the Microsoft "Services for Unix" installed. For all the *nix purists, I realize this could be done more simply in AWK, but that would be more difficult to illustrate. If anyone is keen on that, by all means post the equivalent / better AWK syntax in our comment form - or perl / python or whatever your method is. The end goal is always the same, but the different methods of getting there can be really interesting!

Anyway, my filtering command was:

D:\syslog\archive\2014-07-03>type SyslogCatchAll.txt | grep -v a.a.a.b | grep -v a.a.a.c | grep "/53 " | sed s/\t/" "/g | cut -d " " -f 13 | grep inside | sed s/:/" "/g | sed s/\//" "/g | cut -d " " -f 2 | sort | uniq -c | sort /R

This might look a little complicated, but let's break it up.

| Command | What it does |
| --- | --- |
| `grep -v a.a.a.b \| grep -v a.a.a.c` | Remove all the records from the two "legit" DNS servers. |
| `grep "/53 "` | We're looking for DNS queries, which means traffic to TCP or UDP port 53. Note again the trailing space. |
| `sed s/\t/" "/g` | Convert all of the tab characters in the Cisco syslog event line to spaces. This mixing of tabs and spaces is typical in syslogs, and can be a real challenge when splitting up a record for searches. |
| `cut -d " " -f 13` | Using the space character as a delimiter, we just want field 13, which will look like "interface name:source ip address/port". |
| `grep inside` | Keep only the entries where that field names the inside interface. |
| `sed s/:/" "/g \| sed s/\//" "/g` | Change those pesky ":" and "/" characters to spaces. |
| `cut -d " " -f 2` | Pull out just the source address. |
| `sort \| uniq -c` | Finally, sort the resulting IP addresses and count each occurrence. |
| `sort /R` | Sort this final list by count in descending order. Note that this is the WINDOWS sort command. In Linux, you would use "sort -rn". |
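Since AWK came up earlier, here is a rough sketch of how the same count might look as a shell function wrapped around a single awk call. Treat it as an illustration rather than a drop-in replacement: the name dns_talkers is made up, and instead of relying on field 13 it assumes Cisco ASA-style tokens of the form interface:address/port (for example inside:10.1.2.3/51234). It also compares the DNS server addresses exactly rather than as grep patterns, and because awk splits on any whitespace, the tab-to-space conversion isn't needed.

```shell
# Sketch only: count port-53 log entries per inside source address.
# Assumes tokens shaped like interface:address/port, e.g. inside:10.1.2.3/51234
# (an assumption about the log format - adjust the patterns to suit your logs).
# $1 = space-separated list of legitimate DNS servers; log text on stdin.
dns_talkers() {
    awk -v servers="$1" '
        BEGIN {
            n = split(servers, s, " ")
            for (i = 1; i <= n; i++) legit[s[i]] = 1
        }
        {
            src = ""; dns = 0
            for (i = 1; i <= NF; i++) {
                # a field ending in /53 plays the role of grep "/53 "
                if ($i ~ "/53$") dns = 1
                # only look inside interface:address/port tokens
                if ($i !~ "^[^:/]+:[0-9.]+/[0-9]+$") continue
                split($i, part, "[:/]")
                # drop the whole record if a legit DNS server is involved
                if (part[2] in legit) next
                if (part[1] == "inside" && src == "") src = part[2]
            }
            if (dns && src != "") count[src]++
        }
        END { for (ip in count) print count[ip], ip }
    ' | LC_ALL=C sort -k1,1nr -k2,2
}
```

On a Linux host this would be called as: dns_talkers "a.a.a.b a.a.a.c" < SyslogCatchAll.txt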

The final result is the list of hosts that are sending DNS traffic but are not DNS servers. Either they're misconfigured, or they are generating malicious traffic that uses UDP/53 or TCP/53 to hide from detection:

525 10.x.z.201

182 10.x.y.236

115 10.x.z.200

40 10.x.y.2

34 10.x.y.38

20 10.x.y.7

20 10.x.y.118

2 10.x.x.138

2 10.x.x.137

2 10.x.x.136

2 10.x.x.135

2 10.x.x.133

2 10.x.x.132

2 10.x.x.131

So what did these turn out to be? The first few are older DNS servers that were supposed to be migrated, but were forgotten - this was a valuable find for my client. The rest of the list is mostly misconfigured hosts - in many cases they were embedded devices (cameras, timeclocks and TVs) that were installed by 3rd parties, with Google's DNS hard coded. A couple of these stations had some nifty malware, running botnet C&C over UDP port 53 to masquerade as DNS. All of these were good things for my client to find and deal with!

What else might you use this for? Search for tcp/25 to find hosts that are sending mail directly out when they shouldn't be (we found some of the milling machinery on the factory floor that was also happily sending spam), or tcp/110 for users who are using self-installed email clients.

If you are using a proxy server for internet control, it's useful to find workstations that have incorrect proxy settings - in other words, find all the browser traffic (80, 443, 8080, 8081, etc.) that is NOT going through the proxy.

SSH, Telnet, tftp, ftp, sftp and ftps are other protocols that you might be interested in, as they are common protocols to send data in or out of your organization.
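The searches above all follow the same pattern - pick the ports you care about, ignore the hosts that are allowed to use them, and count what's left per source. A minimal sketch of that as one function is below. The name port_talkers and its arguments are hypothetical, and it assumes Cisco ASA-style interface:address/port tokens, with "inside" marking the source side.

```shell
# Sketch only: list inside hosts talking to any of the given destination ports.
# $1 = space-separated ports of interest, $2 = optional sources to ignore
# (a proxy or mail relay, say). Prints "count port source", log text on stdin.
# Assumes interface:address/port tokens, with "inside" marking the source.
port_talkers() {
    awk -v ports="$1" -v ignore="${2:-}" '
        BEGIN {
            n = split(ports, p, " ");  for (i = 1; i <= n; i++) want[p[i]] = 1
            n = split(ignore, g, " "); for (i = 1; i <= n; i++) skip[g[i]] = 1
        }
        {
            src = ""; port = ""
            for (i = 1; i <= NF; i++) {
                # only look inside interface:address/port tokens
                if ($i !~ "^[^:/]+:[0-9.]+/[0-9]+$") continue
                split($i, part, "[:/]")
                if (part[1] == "inside") {
                    if (src == "") src = part[2]
                } else if (port == "" && (part[3] in want)) {
                    port = part[3]
                }
            }
            if (src != "" && port != "" && !(src in skip)) count[port " " src]++
        }
        END { for (k in count) print count[k], k }
    ' | LC_ALL=C sort -k2,2n -k1,1nr -k3,3
}
```

For the proxy case you might run something like port_talkers "80 443 8080 8081" "address-of-your-proxy" < SyslogCatchAll.txt, and for mail, port_talkers "25 110" with your mail relay as the address to ignore.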

VPN and other tunnel traffic is another traffic type that you should be looking at for analysis. Various common VPN protocols include:

IPSEC is generally some combination of:

ESP - IP Protocol 50. For this you would look for "ESP" in your logs - it's not TCP or UDP traffic at all.

ISAKMP - udp/500

IPSEC can be encapsulated in UDP, commonly in udp/500 and/or udp/4500, though really you can encapsulate using any port, as long as the other end matches. You can also encapsulate in TCP - many VPN gateways default to tcp/10000, but that's just a default, it could be anything.

GRE (Cisco's Generic Routing Encapsulation) - IP Protocol 47

Microsoft PPTP - TCP/1723 plus IP protocol 47
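As a starting point, the list above could be turned into a quick tally of suspect lines per tunnel type. This is only a sketch: the function name tunnel_summary is invented, and the match strings are guesses at how a device might log these protocols (by name, or as "protocol N" for the non-TCP/UDP ones) - check them against real entries from your own gear before trusting the counts.

```shell
# Sketch only: tally log lines that look like VPN or tunnel traffic.
# The match strings are assumptions about how your devices log these
# protocols (by name or as "protocol N") - check them against real entries.
# Log text on stdin; prints "count label", busiest first.
tunnel_summary() {
    awk '
        {
            # pad and upper-case so whole-word tests are simple
            line = " " toupper($0) " "; hit = ""
            if (line ~ "[^A-Z0-9]ESP[^A-Z0-9]" || line ~ "PROTOCOL 50[^0-9]") {
                hit = "ESP (IP protocol 50)"
            } else if (line ~ "[^A-Z0-9]GRE[^A-Z0-9]" || line ~ "PROTOCOL 47[^0-9]") {
                hit = "GRE (IP protocol 47)"
            } else if (line ~ "UDP" && line ~ "/(500|4500)[^0-9]") {
                hit = "IPSEC over UDP (udp/500, udp/4500)"
            } else if (line ~ "TCP" && line ~ "/1723[^0-9]") {
                hit = "PPTP (tcp/1723)"
            } else if (line ~ "TCP" && line ~ "/10000[^0-9]") {
                hit = "IPSEC over TCP (tcp/10000)"
            }
            if (hit != "") count[hit]++
        }
        END { for (h in count) print count[h], h }
    ' | LC_ALL=C sort -k1,1nr -k2
}
```

Run it as tunnel_summary < SyslogCatchAll.txt, then go back with grep for the detail on any category that shows a count you didn't expect.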

What else might you look for? How about protocols that encapsulate IPv6? Teredo and 6to4 are the tunneling protocols that Microsoft enables by default. 6to4 is carried as IP protocol 41 (see https://isc.sans.edu/diary/IPv6+Focus+Month%3A+IPv6+Encapsulation+-+Protocol+41/15370 ), while Teredo is wrapped in UDP, normally on udp/3544.

If you've got a list of protocols of interest, you can easily drop all of these in a single script and run them at midnight each day, against yesterday's logs.
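A bare-bones version of such a script might look like the sketch below. It assumes one directory per day named YYYY-MM-DD with a SyslogCatchAll.txt inside it, as in the archive path shown earlier, and it uses GNU date to work out yesterday's date. The function name, the labels and the short list of patterns are only examples - swap in your own protocols of interest.

```shell
# Sketch only: count matches for each protocol of interest in one day of logs.
# Assumes one directory per day (YYYY-MM-DD) holding SyslogCatchAll.txt, and
# GNU date for working out "yesterday" - both are assumptions to adjust.
# $1 = archive root, $2 = optional day to report on instead of yesterday.
run_daily_checks() {
    root=$1
    day=${2:-$(date -d yesterday +%Y-%m-%d)}
    log="$root/$day/SyslogCatchAll.txt"
    [ -r "$log" ] || { echo "cannot read $log" >&2; return 1; }
    echo "Report for $day"
    # one check per line: a label, then an extended regex for grep -E
    while read -r label pattern; do
        [ -n "$label" ] || continue
        echo "$(grep -Ec -e "$pattern" "$log") $label"
    done <<'EOF'
dns /53([[:space:]]|$)
smtp /25([[:space:]]|$)
pop3 /110([[:space:]]|$)
pptp /1723([[:space:]]|$)
EOF
}
```

Saved as a script and called from cron (or the Windows Task Scheduler, if you're running the Unix tools there) a few minutes after midnight, this gives you a one-page summary each morning, and any count that jumps is your cue to dig in with the longer pipeline.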

Using just CLI tools, what clowns have you found in your logs? And what commands did you use to extract the information?

===============

Rob VandenBrink

Metafore
